VMware vSAN has two different storage architectures:

OSA, or Original Storage Architecture, is the current name for the "old" VMware vSAN, first released in March 2014 (vSAN 5.5).

ESA, or Express Storage Architecture, is the "new" vSAN, available since vSAN 8. It is built on an entirely different foundation and has significant advantages over OSA. Some features are not yet on par with OSA, but I expect those gaps to close in future releases.

Difference between OSA and ESA

OSA uses disk groups, each with a dedicated caching device. It supports a wide range of hardware, including spinning disks (HDDs).

ESA uses a single storage pool instead of disk groups. Because there are no dedicated caching drives, every drive contributes capacity, which makes ESA more storage-efficient.

Its new log-structured filesystem is optimized for all-NVMe hardware.
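To make the structural difference concrete, here is a minimal sketch that models the two layouts. The classes are hypothetical and exist only for illustration; they are not a VMware API. The limits encoded for OSA (one cache drive plus 1 to 7 capacity drives per disk group, up to 5 disk groups per host) are the documented OSA maximums, while the ESA host is simply a flat pool in which every drive counts toward capacity.

```python
from dataclasses import dataclass

# Hypothetical, simplified model for illustration only; not a VMware API.


@dataclass(frozen=True)
class Drive:
    name: str
    size_tb: float


@dataclass
class DiskGroup:
    """OSA disk group: exactly one cache drive plus 1-7 capacity drives."""
    cache: Drive
    capacity: list[Drive]

    def __post_init__(self) -> None:
        if not 1 <= len(self.capacity) <= 7:
            raise ValueError("an OSA disk group needs 1-7 capacity drives")


@dataclass
class OsaHost:
    """OSA host: between one and five disk groups."""
    disk_groups: list[DiskGroup]

    def __post_init__(self) -> None:
        if not 1 <= len(self.disk_groups) <= 5:
            raise ValueError("an OSA host supports 1-5 disk groups")

    def capacity_drives(self) -> list[Drive]:
        # Cache drives are excluded: they do not add usable capacity.
        return [d for group in self.disk_groups for d in group.capacity]


@dataclass
class EsaHost:
    """ESA host: one storage pool of NVMe drives, no cache tier."""
    storage_pool: list[Drive]

    def capacity_drives(self) -> list[Drive]:
        # Every drive in the pool contributes capacity.
        return list(self.storage_pool)


if __name__ == "__main__":
    drives = [Drive(f"nvme{i}", 3.84) for i in range(8)]
    osa = OsaHost([
        DiskGroup(cache=drives[0], capacity=drives[1:4]),
        DiskGroup(cache=drives[4], capacity=drives[5:8]),
    ])
    esa = EsaHost(storage_pool=drives)
    print(len(osa.capacity_drives()), len(esa.capacity_drives()))  # 6 8
```

With the same eight drives, the OSA layout above gives up two of them to caching, while the ESA pool uses all eight for capacity.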

The following table shows the main differences between the old and new vSAN architectures:

| Feature | OSA | ESA |
|---|---|---|
| Filesystem | vSAN Distributed FS (vDFS) | Log Structured FS (vSAN LFS) |
| Drives | SSDs, HDDs, and hybrid | Certified NVMe SSDs |
| Caching device | 1 cache drive per disk group | No caching drive |
| Hardware requirements | Varies | vSAN ReadyNodes |
| Performance | Good | Excellent |

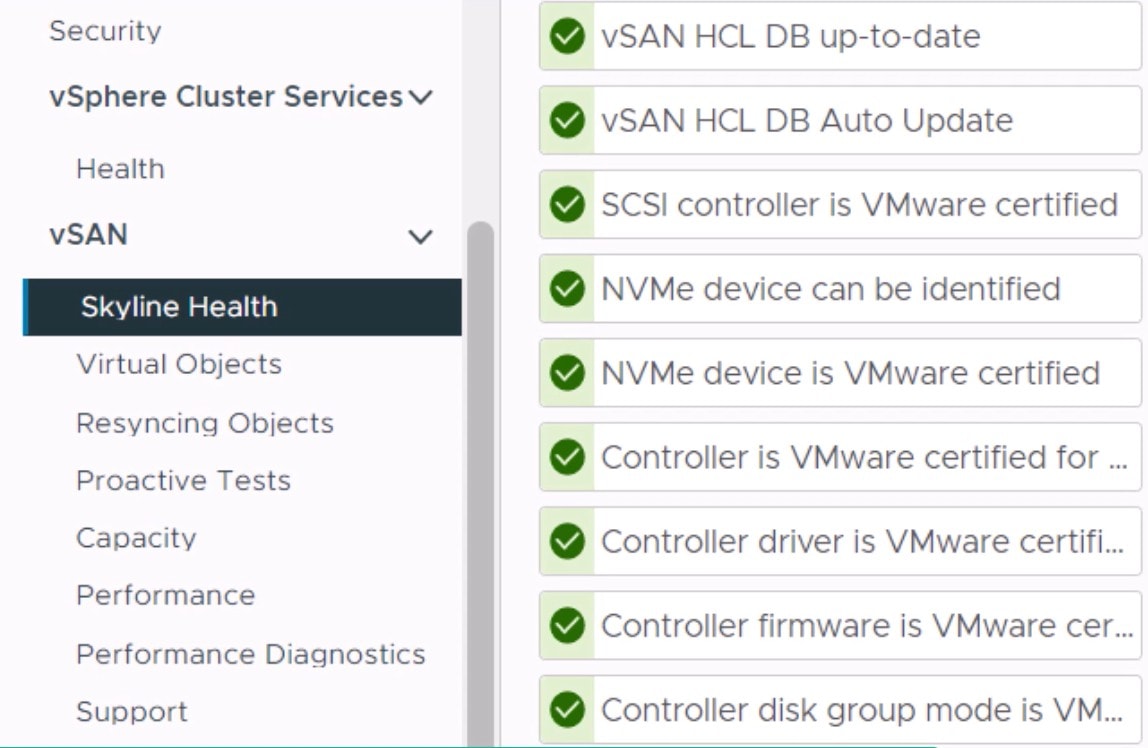

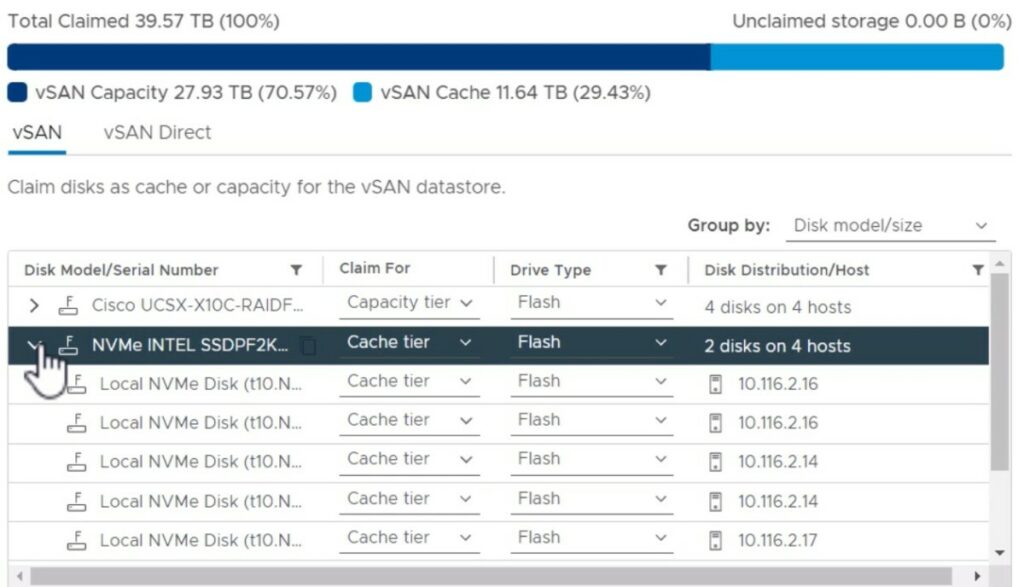

The picture above shows an OSA configuration with caching disks and capacity disks on a UCS X-Series node.

vSAN ESA Hardware Requirements

The ESA hardware requirements are listed in the vSAN ESA Hardware Quick Reference.

Each AF size (ReadyNode profile) requires a different number of CPU cores and a different amount of memory, storage capacity, and network bandwidth.

The most important of those requirements concern the drives.

The drives should be:

– NVMe, rated for 3 Drive Writes Per Day (DWPD)

– Minimum capacity of 1.6 TB
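The two drive criteria above can be expressed as a simple check. The sketch below is a hypothetical helper, not a VMware tool; the thresholds come from this post and should be confirmed against the current vSAN ESA hardware guidance. It also shows how a DWPD rating translates into total terabytes written (TBW) over a warranty period, assuming a 5-year warranty purely for illustration.

```python
from dataclasses import dataclass

# Thresholds taken from the list above; verify them against the current
# vSAN ESA hardware guidance before relying on them.
MIN_DWPD = 3.0
MIN_CAPACITY_TB = 1.6


@dataclass(frozen=True)
class DriveSpec:
    """Hypothetical drive description used only for this example."""
    interface: str           # e.g. "NVMe", "SAS", "SATA"
    dwpd: float              # rated Drive Writes Per Day
    capacity_tb: float       # capacity in TB
    warranty_years: int = 5  # assumed warranty period for the TBW estimate


def meets_esa_drive_minimums(spec: DriveSpec) -> bool:
    """True if the drive is NVMe, rated >= 3 DWPD, and at least 1.6 TB."""
    return (
        spec.interface.upper() == "NVME"
        and spec.dwpd >= MIN_DWPD
        and spec.capacity_tb >= MIN_CAPACITY_TB
    )


def endurance_tbw(spec: DriveSpec) -> float:
    """Total TB written over the warranty: DWPD x capacity x 365 x years."""
    return spec.dwpd * spec.capacity_tb * 365 * spec.warranty_years


if __name__ == "__main__":
    drive = DriveSpec(interface="NVMe", dwpd=3, capacity_tb=1.6)
    print(meets_esa_drive_minimums(drive), round(endurance_tbw(drive)))
```

For a 1.6 TB drive at 3 DWPD, that works out to 4.8 TB per day, or about 8,760 TB written over five years.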

Which one should you use: OSA or ESA?

Configuring an OSA system can be somewhat complex because of the disk groups and the required caching devices.

vSAN ESA is designed to be simpler (no caching devices and no disk groups), but it can be more expensive because it requires high-performance NVMe drives. As NVMe drives gain capacity and prices drop, I expect ESA to become the default for vSAN because of its advantages.

UCS X210c nodes are certified to run both vSAN OSA and ESA. See the VMware Compatibility Guide.

I suggest using the ESA configuration because the X-Series node has only six drive slots. With OSA, at least one of those slots must hold a caching device; with ESA, all six hold NVMe capacity drives, which suit all kinds of workloads thanks to their speed and low latency.
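To see why the six drive slots matter, the arithmetic below compares how many slots end up as capacity drives in each architecture. It is a sketch under stated assumptions: the 3.84 TB drive size is a hypothetical example, and the figures are raw capacity before vSAN storage-policy overhead (RAID-1/5/6) and slack space are subtracted.

```python
# Illustrative arithmetic only: raw capacity from the six drive slots of a node.
SLOTS = 6
DRIVE_TB = 3.84  # hypothetical drive size, not a recommendation


def osa_capacity_drives(disk_groups: int, slots: int = SLOTS) -> int:
    """Capacity drives left after each OSA disk group takes one cache slot."""
    remaining = slots - disk_groups
    # Every disk group needs at least one capacity drive of its own.
    if disk_groups < 1 or remaining < disk_groups:
        raise ValueError("not enough slots for this many disk groups")
    return remaining


def esa_capacity_drives(slots: int = SLOTS) -> int:
    """ESA has no cache tier, so every slot holds a capacity drive."""
    return slots


def raw_capacity_tb(capacity_drives: int, drive_tb: float = DRIVE_TB) -> float:
    """Raw capacity before storage-policy overhead and slack space."""
    return capacity_drives * drive_tb


if __name__ == "__main__":
    layouts = [
        ("OSA, 1 disk group", osa_capacity_drives(1)),
        ("OSA, 2 disk groups", osa_capacity_drives(2)),
        ("ESA storage pool", esa_capacity_drives()),
    ]
    for label, n in layouts:
        print(f"{label}: {n} capacity drives, {raw_capacity_tb(n):.2f} TB raw")
```

With these assumptions, OSA yields 5 or 4 capacity drives (19.20 or 15.36 TB raw), while ESA uses all 6 (23.04 TB raw).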

Of course, it all depends on your budget, workload, and required capacity. Keep in mind that NVMe drive capacities are growing quickly.

There are two other blog posts on this site about vSAN on UCSX:

– vSAN on UCSX: Is it possible?

– vSAN on UCSX: ReadyNode.